New Charlotte-Mecklenburg Schools policy: AI can enhance learning if used responsibly

As artificial intelligence seeps into daily life, the Charlotte-Mecklenburg Board of Education aims to incorporate the emerging technologies into the classroom, but with caution.

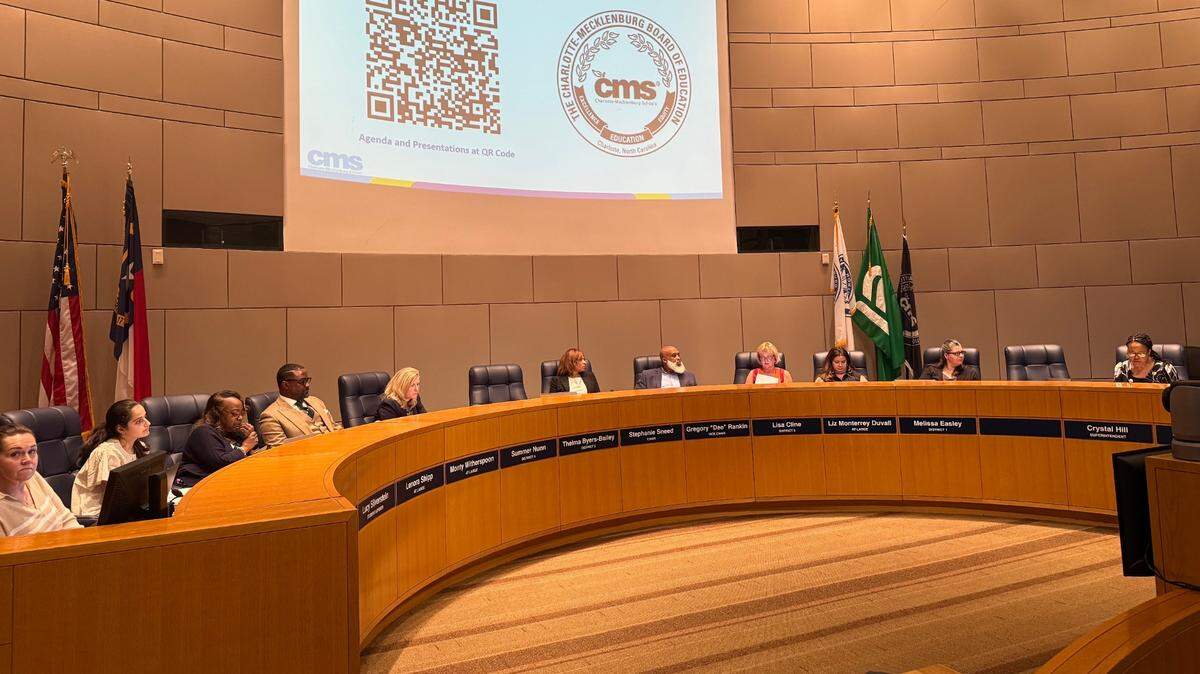

Thursday, the district introduced its proposed new artificial intelligence policy, which says AI should be used with the “goal of enhancing learning and teaching” but must “never replace human interaction, creativity or decision-making.”

The board’s policy committee considered the draft at its meeting Sept. 10 before bringing it to the full board Thursday for its first reading.

“The policy highlights the importance of the responsible and ethical use of AI within our district as our students and staff learn to work with this emerging technology,” Board Vice Chair Dee Rankin said. “It aims to ensure that AI systems introduced into the district align with educational goals, comply with privacy laws, are accessible to our diverse student and staff population and that AI bias is mitigated whenever possible.”

The board will vote on the policy in October, after a public hearing. The policy committee recommended that the policy, if approved, be reviewed annually because of the rapidly evolving landscape of AI technology.

The 5 main takeaways

- All AI technologies used in the district, whether a commercial product or created by CMS, must first be reviewed by a new AI Review Committee.

The committee would be formed by Superintendent Crystal Hill or someone she appoints. When evaluating technology, the committee must consider a particular set of factors, including alignment with the district’s curriculum, data privacy, security and ethical considerations, compatibility with existing systems and potential for bias.

After a technology is approved, the AI Review Committee must continue “regular” audits and evaluations as well as data collection and analysis on the performance of AI systems. The current draft of the policy does not lay out a specific time frame for audits and evaluations.

- The district would have mandatory training programs for all AI users, including students, teachers and staff.

The drafted policy also says the district would need to maintain a centralized repository of all its AI systems, including user guides, training materials and relevant documents. The district would also need to create supplementary resources to help teachers incorporate AI into the classroom.

CMS also would be required to notify parents of the use of AI in their child’s education and provide updates on its impact.

- Students would only be allowed to use AI for “authorized educational purposes.”

Whenever a student does use AI as allowed, they must cite it. If a student breaks the district’s AI policies, they may lose AI privileges or see additional disciplinary consequences as outlined in the Student Code of Conduct.

- The policy prohibits the use of AI tools to make final decisions of any kind in CMS without “independent human judgment and oversight.”

That includes decisions around academic placement, special education eligibility, disciplinary actions, employee evaluations or employment.

- Uploading private information to publicly available generative AI would be prohibited without the Chief Technology Officer signing off first.

This is to protect student and staff data privacy. Users would also be prohibited from uploading any confidential information, including student data, into the district’s AI systems unless the system has been specifically approved for that purpose.

In the event of a data privacy breach, the district would be required to “promptly” notify all affected individuals and relevant authorities.

This story was originally published September 26, 2025 at 6:15 AM.